Designing for Scale: How a Shared Commerce Platform Enabled 89 Site Launches in a Single Year

We built a scalable, API‑driven commerce platform that enabled 10 brands to launch across 26 markets, increased annual launch velocity from 3 to more than 20 sites, and laid the foundation for $1B+ in digital growth.

At a glance

Replaced one-off site builds with a shared commerce platform

Used Clinique Japan as a high-risk pilot to validate reuse

Increased launch velocity from 3 → 20+ → 89 sites/year

Enabled localization and operations without engineering dependency

Launch velocity increased dramatically. The new platform model raised annual launch capacity from roughly 3 sites per year to 20+ initially, and several years later it enabled the organization to launch 89 sites in a single year.

Successful reuse immediately validated the approach. Within months of launching Clinique Japan, the same foundation was reused to launch an additional brand—proving the platform was a blueprint, not a one‑off solution.

Lower cost of change post‑launch. UX updates, technology enhancements, promotions, and content changes could be introduced globally or locally without extensive rework, reducing ongoing maintenance burden.

Localization shifted left. Content localization became faster and less engineering‑dependent, allowing regional teams to move at market speed while maintaining consistency and quality.

Operational readiness at launch. Customer service teams and local operations were equipped with the tools they needed from day one, improving support quality and reducing manual workarounds during early market ramp‑up.

Foundation for long‑term growth. The platform became the basis for scaling commerce across additional brands and markets and later supported significant digital revenue growth at the enterprise level.

Business Problem:

Each site launch was treated as a standalone project. Brand and market implementations were built as one‑offs, with limited reuse and significant rework required even when patterns were already proven.

As a result:

New launches were slow, costly, and difficult to scale with business growth

Post‑launch maintenance was fragile, making it hard to roll out UX improvements, new technologies, or enhancements consistently

The platform increasingly acted as a bottleneck rather than an enabler

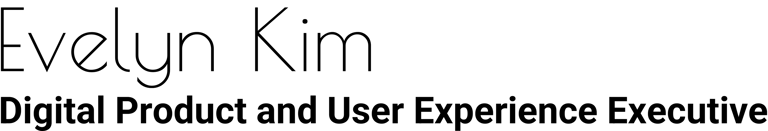

As international expansion accelerated, these issues compounded. Localization remained heavily engineering‑dependent, slowing markets and straining teams. Without a fundamental shift in platform design, the organization risked falling behind its own growth ambitions and adding complexity faster than it could sustainably support.

Strategic Bet:

We made a deliberate decision not to take shortcuts to hit the initial Japan launch. The tempting path was to hard‑code market‑ or carrier‑specific solutions to get Clinique Japan live quickly. That would have optimized for speed in the moment, but it would also have guaranteed rework and fragility immediately afterward.

Instead, we placed a clear bet:

Optimize for reusability, flexibility, and maintainability over short‑term speed. We designed the platform so core capabilities could be built once and adapted across carriers, brands, and markets without bespoke engineering.

Treat Japan as a blueprint, not an exception. Clinique Japan needed to launch successfully, but the real test was whether the same foundation could support another brand launch just a few months later.

Design for operational handoff. Post‑launch support would transition to a local team, so the system had to be understandable, configurable, and operable by non‑engineers who did not depend on the original build team.

This bet required more discipline up front, but it created long‑term leverage: faster subsequent launches, lower maintenance costs, and a platform that scaled with the business instead of being rewritten market by market.

Constraints & Non-Negotiables

We deliberately chose Clinique Japan as the pilot because it was the hardest test of whether a shared platform could truly scale.

Key constraints shaped every decision:

Mobile complexity was unavoidable. Japan was a mobile‑first market with three distinct carrier technologies. The platform needed to reuse core capabilities while flexing for carrier‑specific requirements.

Testing access was limited. We lacked direct access to the full range of consumer devices, so we had to combine emulators with a constrained set of physical phones and still protect launch quality.

Time pressure was real. In parallel, we were standing up new commerce operations, launching a new brand site, and delivering a full end‑to‑end UX.

Partners added risk. New fulfillment and payment partners, many operating in a different language, introduced operational and coordination complexity during a critical launch window.

Reusability was mandatory. Any solution that worked only for Japan would fail. The platform had to support global, brand‑specific, market‑specific, and site‑specific variation without creating future rework.

These constraints made incremental fixes unviable. The solution had to be flexible by design, not customized after the fact.

Outcomes That Mattered:

What We Built:

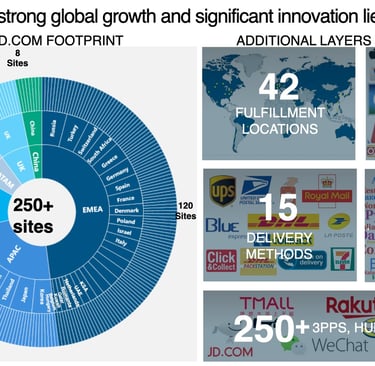

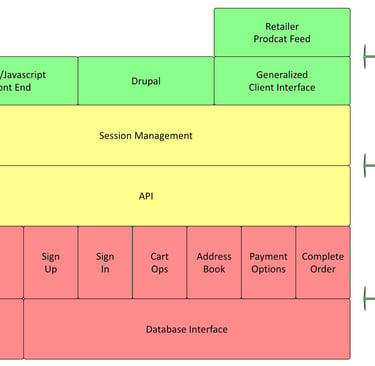

We built a modular, API‑driven commerce platform designed explicitly for reuse, localization, and operational handoff.

Key elements included:

Global content library with localization built in. All copy across Account, Checkout, and support services was managed through a resource‑bundle key/value system. This allowed original and localized content to be viewed side‑by‑side, made missing translations immediately visible (keys rendered when values were absent), and enabled non‑engineers to identify gaps without tooling support.

Clear separation of concerns by level. Core business logic lived at the global layer; brand‑specific requirements (such as style guides) were handled at the brand level; market‑specific needs (privacy, user data, payments) were isolated at the locale level; and site‑specific elements (promotions, inventory) were configured at the site level. This structure maximized reuse while preserving flexibility.

API‑first architecture. We decoupled front‑end experiences from back‑end logic so teams could evolve UX independently while maintaining a consistent, scalable core.

Privacy‑aware data structures. Data models were designed to support varying regional privacy requirements from the outset, reducing future rework as regulations evolved.

Promotion management as a growth lever. We built a new promotion management tool for business users, recognizing it as critical to conversion and as a foundation for future loyalty and brand programs.

CMS tools for non‑engineers. Business and regional teams could manage resource bundles and content updates directly, reducing dependency on engineering for routine changes.

Built‑in testing and validation tools. We created internal tools to validate user and order data capture and to inspect data exchanges between Estée Lauder systems and fulfillment partners, improving confidence, speed, and reliability during launches.

Customer service tooling for assisted commerce. We built dedicated tools for customer service teams to view customer context, support inquiries, and place phone orders on a customer’s behalf, ensuring high‑touch service without breaking the integrity of the commerce system.
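The resource‑bundle behavior described under the global content library can be sketched roughly as follows. This is a minimal illustration, not the platform's actual implementation: the bundle contents, the key names, and the function names (`translate`, `missing_keys`, `side_by_side`) are all assumptions made for the example.

```python
from typing import Dict, List, Tuple

# Hypothetical bundles: keys and copy are illustrative, not taken from the platform.
BUNDLES: Dict[str, Dict[str, str]] = {
    "en_US": {
        "account.sign_in": "Sign in",
        "checkout.place_order": "Place order",
        "checkout.title": "Checkout",
    },
    "ja_JP": {
        "account.sign_in": "ログイン",
        "checkout.title": "ご注文手続き",
        # "checkout.place_order" is deliberately left untranslated.
    },
}


def translate(key: str, locale: str) -> str:
    """Return the localized value, or the key itself when no value exists.

    Rendering the raw key makes a missing translation visible on the page.
    """
    return BUNDLES.get(locale, {}).get(key, key)


def missing_keys(source_locale: str, target_locale: str) -> List[str]:
    """List source keys that have no (or an empty) value in the target locale."""
    source = BUNDLES.get(source_locale, {})
    target = BUNDLES.get(target_locale, {})
    return sorted(key for key in source if not target.get(key))


def side_by_side(source_locale: str, target_locale: str) -> List[Tuple[str, str, str]]:
    """Pair each source value with what the target locale would render."""
    source = BUNDLES.get(source_locale, {})
    return [(key, value, translate(key, target_locale))
            for key, value in sorted(source.items())]


if __name__ == "__main__":
    for row in side_by_side("en_US", "ja_JP"):
        print(row)
    print("Missing in ja_JP:", missing_keys("en_US", "ja_JP"))
```

In this sketch the untranslated entry surfaces as its own key, which is the property that lets a reviewer spot a gap by reading the page rather than by running a separate tool.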

Together, these components formed a system that was flexible by design, resilient in operation, and scalable across brands and markets.
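The four‑level separation of concerns (global, brand, locale, site) can be pictured as layered configuration in which each narrower level overrides the broader one. The sketch below assumes a simple dictionary merge. The setting names, values, and the `resolve` function are hypothetical and serve only to show the override order, not how the platform actually stored or resolved its configuration.

```python
from typing import Any, Dict

# Hypothetical settings at each level; none of these names come from the platform.
GLOBAL_DEFAULTS: Dict[str, Any] = {
    "checkout.steps": 3,
    "privacy.consent_required": False,
    "promotions.enabled": False,
}
BRAND_SETTINGS: Dict[str, Dict[str, Any]] = {
    "clinique": {"theme": "clinique-style-guide"},
}
LOCALE_SETTINGS: Dict[str, Dict[str, Any]] = {
    "ja_JP": {"privacy.consent_required": True, "payment.methods": ["card", "cod"]},
}
SITE_SETTINGS: Dict[str, Dict[str, Any]] = {
    "clinique-jp": {"promotions.enabled": True},
}


def resolve(brand: str, locale: str, site: str) -> Dict[str, Any]:
    """Merge settings from broadest to narrowest: global, brand, locale, site.

    Later layers win, so a site can override a locale, a locale can override
    a brand, and everything falls back to the global defaults.
    """
    merged: Dict[str, Any] = {}
    layers = (
        GLOBAL_DEFAULTS,
        BRAND_SETTINGS.get(brand, {}),
        LOCALE_SETTINGS.get(locale, {}),
        SITE_SETTINGS.get(site, {}),
    )
    for layer in layers:
        merged.update(layer)
    return merged


if __name__ == "__main__":
    for key, value in sorted(resolve("clinique", "ja_JP", "clinique-jp").items()):
        print(f"{key} = {value}")
```

Under these assumptions, launching another brand in the same market would mean adding one brand entry and one site entry while reusing the global and locale layers unchanged.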

How I Lead:

I optimize for long‑term velocity over short‑term wins. I’m willing to invest more discipline up front if it creates compounding speed and reduces friction for every launch that follows.

I design systems that distribute power, not create dependency. I build platforms, tools, and operating models that enable brand, regional, and service teams to move independently—without constant central escalation.

I treat governance as an enabler, not bureaucracy. Clear boundaries (global, brand, locale, site) create autonomy with guardrails, allowing teams to scale without chaos or rework.